UPDATE: Index Coverage reports are now called Page Indexing.

How-To Fix Page Indexing Errors in Google Search Console

Previous post, which may still be useful:

Worry no more, because RankYa has created easy-to-understand video tutorials for fixing all Index Coverage issues, errors and warnings. The Index Coverage reports in the new Search Console are important because if Google has problems indexing content on a site, the URLs it has issues with won’t be able to show up in search.

What is Index Coverage in Google Search Console?

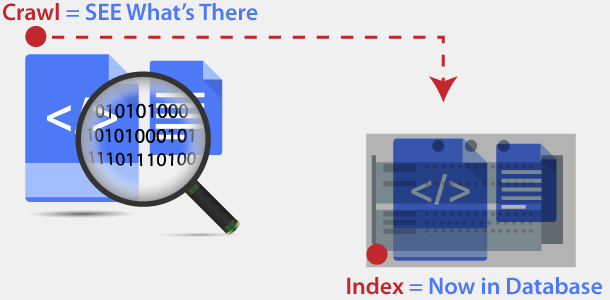

To fix the problems, we have to understand what could have caused them (so that you can avoid them in the future) and briefly look at what Index Coverage reporting shows us. Let’s first look at how Google’s crawling compares to indexing. The process Googlebot follows can be summarized as:

- Visit (fetch) a URL (example: https://www.rankya.com/how-to/)

- Check whether the URL was FETCHED. If so, analyze the contents of the URL

- Sort the contents (for example: text goes into its database for text, image files go into its Image Search Engine database, and likewise for PDFs, videos etc.)

- Perform calculations to see IF the content is unique AND if it’s worth storing

- Index the content, including keywords, and store it on Google’s servers
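The steps above can be sketched as a toy pipeline. This is only an illustration of the order of the steps, not how Googlebot actually works: the function names, the store labels and the exact-hash uniqueness check are all assumptions made for the example.

```python
import hashlib


def classify_content(content_type: str) -> str:
    """Pick a (hypothetical) store for the content based on its MIME type."""
    if content_type.startswith("text/"):
        return "text"
    if content_type.startswith("image/"):
        return "image"
    if content_type == "application/pdf":
        return "pdf"
    if content_type.startswith("video/"):
        return "video"
    return "other"


def process_url(url: str, status: int, content_type: str, body: str, index: dict) -> str:
    """Walk one already-fetched URL through the steps listed above.

    Returns a short label describing where the process stopped.
    """
    # Was the URL fetched? Anything other than 200 counts as a crawl problem here.
    if status != 200:
        return "crawl problem"

    # Sort the contents into a store.
    store = classify_content(content_type)

    # Toy uniqueness check: empty or exactly duplicated bodies are not stored.
    fingerprint = hashlib.sha256(body.encode("utf-8")).hexdigest()
    if not body.strip() or fingerprint in index:
        return "not indexed"

    # Index the content.
    index[fingerprint] = {"url": url, "store": store}
    return "indexed"
```

Calling `process_url` twice with the same body under two different URLs stores only the first one, which mirrors the idea that a URL can be fetched without being indexed.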

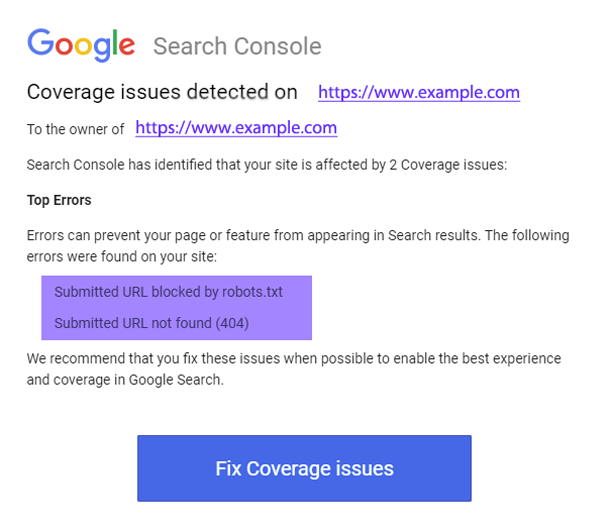

Knowing this, the report is telling you one of two things: either the XML Sitemap you’ve submitted has URLs that Google is trying to fetch but can’t, or it has URLs that Google can fetch but is having problems indexing.
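If you want to see exactly which URLs your XML Sitemap is asking Google to fetch, a short script can list them. This is a minimal sketch that assumes a standard sitemap using the sitemaps.org namespace; the function name `sitemap_urls` is made up for the example, and sitemap index files are not handled.

```python
import xml.etree.ElementTree as ET

# Namespace used by standard XML Sitemaps.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> list:
    """Return every <loc> URL listed in a sitemap document given as text."""
    root = ET.fromstring(xml_text)
    urls = []
    for loc in root.findall("sm:url/sm:loc", SITEMAP_NS):
        if loc.text and loc.text.strip():
            urls.append(loc.text.strip())
    return urls
```

Comparing this list against the URLs flagged in Search Console is a quick way to spot unwanted URLs that should not have been submitted in the first place.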

Difference Between Crawling and Indexing

Crawl = Fetch URL > https://www.rankya.com/how-to/

When Search Console Index Coverage shows any Crawl Errors (such as 404 Not Found or 5xx Server Errors), it basically means that Googlebot is NOT able to access the URL it is trying to access.
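To make the distinction concrete, here is a small sketch that groups HTTP status codes the way the paragraph above describes. The labels are my own shorthand for the example, not the exact wording Search Console uses.

```python
def crawl_outcome(status_code: int) -> str:
    """Map an HTTP status code to a rough crawl outcome (labels are illustrative)."""
    if 200 <= status_code < 300:
        return "fetched"
    if 300 <= status_code < 400:
        return "redirect"
    if status_code == 404:
        return "not found"
    if 400 <= status_code < 500:
        return "client error"
    if 500 <= status_code < 600:
        return "server error"
    return "unknown"
```

Only the "fetched" outcome lets the process continue on to indexing; everything else is a crawl problem to investigate first.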

Crawl issues like these are commonly related to incorrectly set up robots.txt directives. Since Google gives you options to control what you share with it, not every website is set up to allow Googlebot full access. You can either delete the robots.txt file entirely from your web server or, if you are familiar with robots.txt directives, use the robots.txt tester for troubleshooting.
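You can also check robots.txt rules locally before touching the live file. The sketch below uses Python’s standard `urllib.robotparser` module; the helper name `googlebot_allowed` is an assumption for the example. Keep in mind that Python’s parser does not match rules exactly the way Google does (wildcards, for instance), so treat the result as a first check only.

```python
from urllib.robotparser import RobotFileParser


def googlebot_allowed(robots_txt: str, url: str) -> bool:
    """Return True if the given robots.txt text lets Googlebot fetch the URL."""
    parser = RobotFileParser()
    # parse() takes the file contents as a list of lines, so no network access is needed.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("Googlebot", url)
```

For example, with a robots.txt containing `User-agent: *` and `Disallow: /private/`, a URL under `/private/` is reported as blocked while https://www.rankya.com/how-to/ is allowed.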

Index = Store Content in Google’s Database

When Google CAN access the URL but has issues understanding the landing page contents, this translates into indexation issues. Oftentimes these types of problems are due to incorrect setup or optimization of the website’s HTML, or to unwanted URLs in the XML Sitemap. For example, if your website uses noindex meta tags similar to this:

<meta name="Googlebot" content="noindex">

<meta name="robots" content="noindex" >

This is saying: hey Google, you are NOT allowed to index this URL (whichever URL the meta tag is located at).
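To find out whether a page carries one of these tags, you can scan its HTML. Here is a minimal sketch using Python’s built-in `html.parser`; the class and function names are made up for the example, and it only looks at meta tags, so a noindex sent through an HTTP header would not be detected.

```python
from html.parser import HTMLParser


class NoindexFinder(HTMLParser):
    """Flags pages whose robots or googlebot meta tag contains noindex."""

    def __init__(self):
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute names arrive lowercased; values may be None when missing.
        attributes = {name: (value or "") for name, value in attrs}
        meta_name = attributes.get("name", "").strip().lower()
        directives = [d.strip().lower() for d in attributes.get("content", "").split(",")]
        if meta_name in ("robots", "googlebot") and "noindex" in directives:
            self.noindex = True


def has_noindex(html: str) -> bool:
    """Return True if the HTML contains a noindex meta tag for robots or Googlebot."""
    finder = NoindexFinder()
    finder.feed(html)
    return finder.noindex
```

Running `has_noindex` on the saved HTML of a URL flagged in Search Console tells you quickly whether a stray meta tag is the reason it is excluded.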

All Index Coverage Errors in Google Search Console Must Be Fixed

If Google does not put the contents of a landing page in its database index, then, it won’t be able to show that content to searchers.

Looking at the example above, Google is saying: “Hey, you’ve submitted an XML Sitemap, but when we visit the URLs in it, we are having problems that you need to fix.”

Video Tutorials by RankYa That Explain What the Index Coverage Report Is All About

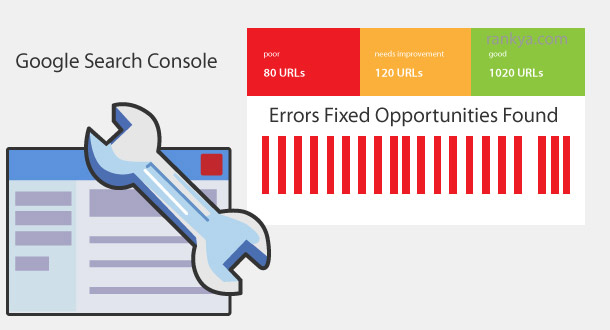

Indexing Issues in Google Search Console Mean Indexing Errors for Landing Pages, Which in Turn Affect Google Rankings

As you now know, if Google cannot index content on your website, it won’t be able to show it to its searchers. Critically, if a landing page is bringing in a good amount of organic traffic each day and Google suddenly starts having indexing errors with it, you can lose that once-enjoyed free organic website traffic. This means the Index Coverage errors are what you must fix first, or at least you must identify why Googlebot had, or is having, issues.

How to Fix Index Coverage issues detected in Google Search Console – WordPress

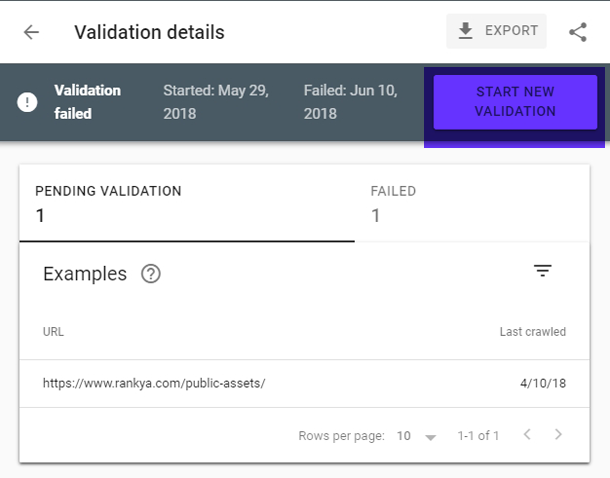

Validate But What if Validation Fails?

Once you are comfortable with troubleshooting these errors, warnings and issues, it’s time to validate. Simply press the Validate button and let Google automatically check the issue. You can always press the SEE DETAILS button to see the progress of the validation. Allow at least one week; if your validation is successful, the errors will no longer be shown in Search Console.

If your validation fails, you can always re-validate the issues by pressing the start new validation option, but it’s smart to triple-check your previous validation results before you do. It’s absolutely important to fully resolve the errors before the revalidation process.

The hottest index coverage topic is what to do when you are unable to fix those problems. Essentially, this how-to tutorial gives me a way of resolving them with ease.

Great tutorial.

Nice information sir in the post

I’m glad you’ve found this information about search console index coverage issues helpful. RankYa maintains a full video course on Google Search Console. In fact, RankYa was the first YouTuber uploading videos explaining Google Webmaster Tools 🙂